By Daniel Dunaief

Scientists rarely have people standing at their lab door, waiting eagerly for the results of their studies the way the public awaits high-profile verdicts.

That, however, changed over the last 16 months, as researchers, public health officials, school administrators and a host of others struggled to understand every aspect of the basic and translational science involved in the SARS-CoV-2 virus, which caused the COVID-19 pandemic.

With people becoming infected, hospitalized and dying at an alarming rate, businesses closing and travel, entertainment and sporting events grinding to a halt, society looked to scientists for quick answers. One challenge is that “quick” and “answers” don’t often mesh well in the deliberate, careful and complicated world of scientific publishing.

The scientific method involves considerable checking, rechecking and careful, statistically relevant analysis. The process is not typically designed for sharing information until other researchers have reviewed it and questioned the approach, methodology and interpretation.

The pandemic changed that last year, increasing the importance of preprint servers like bioRxiv and medRxiv at Cold Spring Harbor Laboratory, which let researchers share unfiltered and unchecked information more quickly than a scientific review and publishing process that can take months or even years.

These servers enabled scientists from all over the world to exchange updated research with each other, in the hope of improving the basic understanding, diagnosis, treatment and prevention of the spread of the deadly virus.

The importance of these servers left those running them in a bind, as they wanted to strike a balance between honoring their mission of sharing information quickly and remaining responsible about the kinds of information, speculation or data that might prove dangerous to the public.

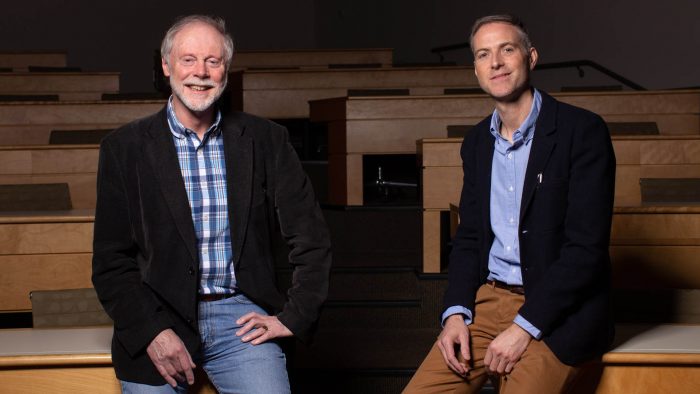

Richard Sever and John Inglis, respectively Assistant Director and Executive Director of Cold Spring Harbor Laboratory Press, created pandemic-specific criteria for work reporting potential COVID-19 therapies.

“Manuscripts making computational predictions of COVID-19 therapies are accepted only if they also include in vitro [studies in test tubes or with live cells] or in vivo [studies in live subjects] work,” the preprint directors wrote in a recent blog. “This restriction does not apply to non-covid-19 work.”

Inglis and Sever continue to decline research papers that might cause people to behave in ways that compromise public health.

“We are simply doing our best to tread carefully in the early days of clinical preprints, as we gain experience and bias our actions toward doing no harm,” the authors wrote in their blog.

In the first few months after the pandemic hit the United States, the pace at which scientists submitted papers was the highest that bioRxiv, which was founded in November of 2013, and medRxiv, which was started in June of 2019, had ever experienced. Many of those scientists had pivoted from their primary work to direct their expertise to the public health threat.

These preprint servers published papers that wound up leading to standards of care for COVID-19, including a research report that appeared on June 22 in medRxiv on the use of the steroid dexamethasone, which was one of the treatments former President Donald Trump received when he contracted the virus.

The rush to publish information related to the virus has slowed, although researchers have still posted over 16,000 papers related to the virus through the two preprint servers. MedRxiv has published 12,400 pandemic-related papers since January of 2020, while bioRxiv has published over 3,600.

At its peak in late March of 2020, medRxiv’s abstract views reached 10.9 million, while downloads of the articles were close to five million.

Currently, bioRxiv is publishing about 3,500 papers a month, while medRxiv is posting about 1,300. Close to 60 percent of the medRxiv papers continue to cover medical issues related to the pandemic.

The number of page views is “not anywhere near the frenzy of last year,” Inglis said in an interview.

With the volume of papers still high, people can receive alerts from the preprint servers using parameters like their field of interest or word searches.

“The real question is how to sort out the gold from the dross,” Inglis said. While some people have suggested a star system akin to the one shopping services use, Inglis remained skeptical about the benefit of a scientific popularity contest.

“Have you looked at the stuff [with four or five stars] on Amazon? It’s one thing if you’re buying a widget, but it’s different if you’re trying to figure out what’s worthwhile science,” he said.

Other organizations have reviewed preprints, including the Bloomberg School of Public Health at Johns Hopkins.

“By sheer diligence, the [Johns Hopkins team] go into medRxiv mostly and simply pick out things they think are striking,” Inglis said.

At the same time, a team of researchers led by Nicolas Vabret, Robert Samstein, Nicolas Fernandez, and Miriam Merad created the Sinai Immunology Review Project, which provides critical reviews of articles from the Cold Spring Harbor Laboratory preprint sites. The effort ranks COVID-related preprints according to their immunological relevance. Fernandez created a dedicated website to host and integrate the reviews. The group also worked with Nature Reviews Immunology to publish short weekly summaries of preprints, according to a comment piece in that journal.

BioRxiv and medRxiv were founded on the belief that early sharing of results as preprints would speed progress in biomedical research, better equipping scientists to build on each other’s work.

“My team is proud to have contributed to the response to this worldwide human tragedy,” Inglis said. “We’re also glad we made the decision to set up a separate server for health science, in which the screening requirements are different and more stringent.”

Inglis explained that the preprint servers have “learned a lot in the past year” about providing information during a crisis like the pandemic. “If another pandemic arose, we’d apply these learnings and respond immediately in the same way.”