By Daniel Dunaief

Ideally, doctors would like to know about health threats or dangers such as diseases or chronic conditions before they threaten a person’s quality of life or expected lifespan.

On a larger scale, politicians and planners would also like to gauge how people are doing, looking for markers or signs that something may be threatening the health or safety of a community.

Researchers in computer science at Stony Brook University have been designing artificial intelligence programs that explore the language used in social media posts as gauges of mental health.

Recently, lead author Matthew Matero, a PhD student in Computer Science at Stony Brook; senior author H. Andrew Schwartz, Associate Professor in Computer Science at Stony Brook; National Institute on Drug Abuse data scientist Salvatore Giorgi; Lyle H. Ungar, Professor of Computer and Information Science at the University of Pennsylvania; and Brenda Curtis, Assistant Professor of Psychology at the University of Pennsylvania, published a study in the journal npj Digital Medicine in which they used the language in social media posts to predict community rates of opioid-related deaths in the next year.

By looking at changes in language from 2011 to 2017 in 357 counties, Schwartz and his colleagues built a model named TrOP (Transformer for Opioid Prediction) with a high degree of accuracy in predicting the community rates of opioid deaths in the following year.

“This is the first time we’ve forecast what’s going to happen next year,” Schwartz said. The model is “much stronger than other information that’s available” such as income, unemployment, education rates, housing, and demographics.

To be sure, Schwartz cautioned that this artificial intelligence model, which uses some of the same underlying techniques as the oft-discussed ChatGPT to model ordered data, would still need further testing before planners or politicians could use it to mitigate the opioid crisis.

“We hope to see [this model] replicated in newer years of data before we would want to go to policy makers with it,” he said.

Schwartz also suggested that this research, which looked at the overall language use in a community, wasn’t focused on finding characteristics of individuals at risk but rather on the overall opioid death risks to a community.

Schwartz used the self-reported locations in Twitter profiles to determine which community each account represented.

The model, which required each community to have at least 100 active accounts, each with at least 30 posts, has proven remarkably effective in its predictions. It holds out the potential not only of encouraging enforcement or remediation to help communities, but also of indicating which programs are reducing mortality. The model forecast the death rates of those communities with about a 3 percent error.
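The inclusion rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers’ code: the function name `eligible_counties` and the input layout (one `(county, account_id)` pair per post) are hypothetical, and only the two thresholds come from the article.

```python
from collections import Counter

MIN_POSTS_PER_ACCOUNT = 30   # threshold reported in the article
MIN_ACTIVE_ACCOUNTS = 100    # threshold reported in the article


def eligible_counties(posts):
    """Return the set of counties that meet both thresholds.

    `posts` is an iterable of (county, account_id) pairs, one pair per post.
    This layout is hypothetical and chosen only for illustration.
    """
    # Count how many posts each account contributed within each county.
    posts_per_account = Counter(posts)

    # Count, per county, the accounts that reach the post threshold.
    active_accounts = Counter(
        county
        for (county, _account), n_posts in posts_per_account.items()
        if n_posts >= MIN_POSTS_PER_ACCOUNT
    )

    # Keep only counties with enough active accounts.
    return {
        county
        for county, n_accounts in active_accounts.items()
        if n_accounts >= MIN_ACTIVE_ACCOUNTS
    }
```

A county with 99 qualifying accounts, or with 100 accounts of which one has only 29 posts, would be excluded under this sketch.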

Both directions

Schwartz explained that the program effectively predicted positive and negative changes in opioid deaths.

On the positive side, Schwartz said language that reflected a reduction in opioid mortality included references to future social events, vacation, travel and discussions about the future.

Looking forward to travel can be a “signal of prosperity and having adventures in life,” Schwartz said. Talking about tomorrow was also predictive. Such positive signals could also reflect on community programs designed to counteract the effect of the opioid epidemic, offering a way of predicting how effective different efforts might be in helping various communities.

On the negative side, language patterns that preceded increases in opioid deaths included mentions of despair and boredom.

Within-community changes

Other drug and opioid-related studies have involved characterizing what distinguishes people from different backgrounds, such as educational and income levels.

Language use varies in different communities, as words like “great” and phrases like “isn’t that special” can be regional and context specific.

To control for these differences, Schwartz, Matero and Giorgi created an artificial intelligence program that made no assumptions about what language was associated with increases or decreases. They tested whether the AI model could reliably find language that predicted the future by testing it against data the model had never seen before.
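One common way to test a forecast against data a model has never seen is to hold out the most recent years and score predictions only on those. The sketch below assumes that setup. The helper names, the `"year"` field and the choice of mean absolute percentage error are all assumptions made for illustration; the article does not say how the reported error was calculated.

```python
def temporal_split(records, first_test_year):
    """Split records so that every test year comes after every training year.

    `records` is a list of dicts with a "year" key (a hypothetical layout).
    """
    train = [r for r in records if r["year"] < first_test_year]
    test = [r for r in records if r["year"] >= first_test_year]
    return train, test


def mean_absolute_percentage_error(actual, predicted):
    """Average of |actual - predicted| / |actual|, expressed in percent.

    Assumes both sequences have the same length and no actual value is zero.
    """
    if not actual or len(actual) != len(predicted):
        raise ValueError("need two non-empty sequences of equal length")
    total = sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)
```

Splitting by year rather than at random keeps information from later years out of training, which matters when the goal is to forecast the following year.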

By monitoring social media in these specific locales over time, the researchers can search for language changes within the community.

Scientists can explore the words and phrases communities used relative to the ones used by those same communities in the past.

“We don’t make any assumptions about what words mean” in a local context, Schwartz said. He can control for language differences among communities by focusing on language differences within a community.
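The idea of comparing a community only with its own past can be illustrated with simple word frequencies. This is a deliberately simplified sketch, not the TrOP model, which is a transformer; the function names and the token-list input are hypothetical.

```python
from collections import Counter


def relative_frequencies(tokens):
    """Map each word to its share of all tokens in one community-year."""
    counts = Counter(tokens)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {word: count / total for word, count in counts.items()}


def within_community_change(earlier_tokens, later_tokens):
    """Change in each word's relative frequency within the same community.

    Positive values mean the word became more common in the later period.
    """
    earlier = relative_frequencies(earlier_tokens)
    later = relative_frequencies(later_tokens)
    vocabulary = set(earlier) | set(later)
    return {
        word: later.get(word, 0.0) - earlier.get(word, 0.0)
        for word in vocabulary
    }
```

Because each community serves as its own baseline, a word that is simply more common in one region than in another does not register as a signal. Only a shift over time within the same community does.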

Schwartz recognized that refining the model for various communities could enhance the program’s predictive ability.

“If we could fully account for differences in cultural, ethnic and other variables about a community, we should be able to increase the ability to predict what’s going to happen next year,” he said.

With its dependence on online language data, the model was less effective in communities where the number of social media posts is lower. “We had the largest error in communities with the lowest rates of posting,” Schwartz explained. On the opposite side, “we were the most accurate in communities with the highest amounts” of postings or data.

Broader considerations

While parents, teachers and others sometimes urge friends and their children to limit their time on social media because of concerns about its effects on people, a potential positive is that these postings might offer general data about a community’s mental health. The study didn’t delve into individual-level solutions, but these scientists and others have done work that suggests this is possible.

As for his future work, Schwartz said he planned to use this technique and paradigm in other contexts. He is focusing on using artificial intelligence for a better understanding of mental health.

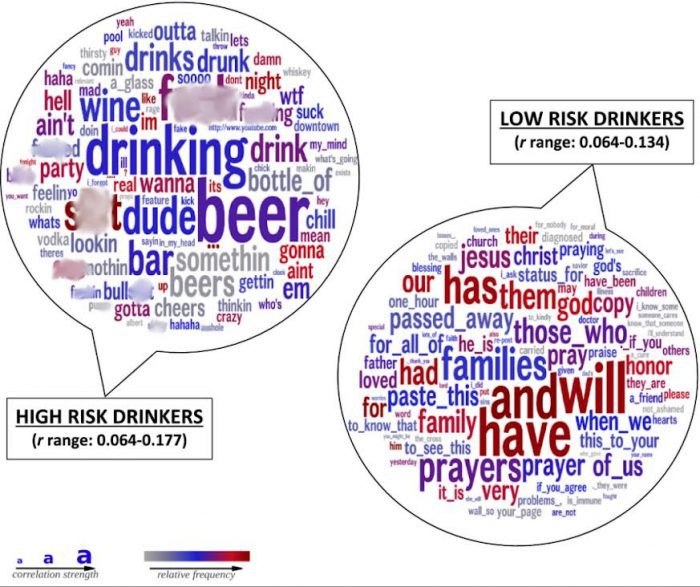

“We hope to take this method and apply it to other outcomes, such as depression rates, alcohol use disorder rates,” post-traumatic stress disorder and other conditions, Schwartz said. “A big part of the direction in my lab is trying to focus on creating AI methods that focus on time-based predictions.”