By Heidi Sutton

You’re seven years old. Your mother is in the hospital. Your father said she’s “done something stupid.”

Thus begins the remarkable one-man play, Every Brilliant Thing. Written by Duncan Macmillan with Jonny Donahoe, the story starts in 1973 as a young boy finds out his mother has attempted suicide. In response, he begins to make a list of everything brilliant about the world, everything worth living for: 1. Ice cream, 2. Water fights, 3. Staying up past your bedtime and being allowed to watch TV, 4. The color yellow, 5. Things with stripes. When his mother returns from the hospital, he leaves the list on her pillow in hopes it will help her heal. She corrects his spelling and gives it back to him.

After his mother’s second suicide attempt ten years later, he brings the list out again and continues to add to it until it takes on a life of its own. He leaves Post-its all over the house in another attempt to reach out to her, to show her that life is truly worth living. When he falls in love with his future wife, Sam, the list becomes a gift for her. When he struggles with his own depression, he rediscovers the list one final time until it reaches one million and helps him heal.

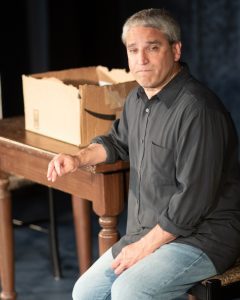

Now, in association with Response Crisis Center, the show heads to Theatre Three’s Ronald F. Peierls Theatre on the Second Stage for its Long Island premiere. Under the direction of Linda May, the show stars Theatre Three’s Executive Artistic Director Jeffrey Sanzel in an incredible performance.

The cabaret-style show recruits members of the audience to join Sanzel on stage to tell the story: the veterinarian who put his childhood dog, Bark Twain, to sleep (the character’s first experience with death); the father who prefers music over talking; and girlfriend Sam, whom he meets in college.

Others participate from their seats as his guidance counselor, Mrs. Patterson, and his favorite college professor, people who have made a profound difference in his life. Still others, when prompted, call out brilliant things from his growing list: 23. Mighty Mouse, 24. Spaghetti with meatballs, 25. Wearing a cape, 317. Star Wars, 319. Laughing so hard you shoot milk out of your nose, 731. Hammocks, 993. Having dessert as your main course.

Sanzel’s performance is, for lack of a more fitting word, brilliant. His ability to improvise is impressive and his presentation is flawless. The audience, which he draws into the story, hangs on his every word from start to finish. The result is an intimate, funny, sad, emotional, heart-warming and cathartic experience that ends much too soon.

While he works the room, Sanzel pauses often to address the audience about suicide prevention and depression:

“It’s important to talk about things, particularly things that are hardest to talk about.”

“It is common for children of suicides to blame themselves. It’s natural.”

“In order to live in the present we have to imagine a future that’s better than our past — because that’s what hope is.”

And finally: “I have some advice for anyone contemplating suicide. It’s really simple advice. Don’t do it. Things get better. They might not always get brilliant, but they get better.”

1092. Conversation, 2000. Coffee, 2005. Vinyl records, 9995. Falling in love, One Million. Listening to a record for the first time, turning it over in your hands, placing the needle down … and then sitting and listening while reading through the sleeve notes.

The list (and show) will change the way you see the world. Don’t miss this one.

Theatre Three, 412 Main St., Port Jefferson, presents Every Brilliant Thing every Sunday at 3 p.m. through Aug. 28. Running time is one hour with no intermission. All seats are $20, with 50% of the proceeds benefiting the Response Crisis Center. Staff members from the Center will be at each performance to answer questions and provide information. Audiences are encouraged to write their own “brilliant things” on the Post-it notes provided in the lobby, which will be on display throughout the show’s run. For more information or to order tickets, call 631-928-9100 or visit www.theatrethree.com.

CONTENT WARNING: Although the play balances the struggles of life while celebrating all that is “truly brilliant” in living each day, Every Brilliant Thing contains descriptions of depression, self-harm, and suicide. It is recommended that only audience members 14 and older attend. If you or somebody you know is struggling, call Response 24/7 at 631-751-7500 or the National Lifeline at 1-800-273-8255.